I’ve been into self-help books recently, and I’ve just finished reading Four Thousand Weeks by Oliver Burkeman. I really enjoyed it, so I’ve compiled a list of lessons that were super helpful for me and that I wanted to share.

1. You won’t have time to do everything that you want to do

On average, you only have about 4,000 weeks on earth, so you cannot possibly do everything that you want to do. Many of us imagine time as a conveyor belt that is constantly passing us by. Each hour or week is like a container carried on the belt, which we feel compelled to fill as it passes if we are to feel that we’re making good use of our time. When there are too many things to fit in the container, we feel unpleasantly busy; when there are too few, we feel bored. If we keep pace with the passing containers, we congratulate ourselves for “staying on top of things” and feel like we’ve justified our existence; if we let too many pass by unfilled, we feel we’ve wasted them.

The more we attempt to master time and attain a feeling of total control over it, the more we run into the inevitable constraints of being human. Embrace your limitations as a human: you will most definitely not have time for everything that you want to do, “and so, at the very least, you can stop beating yourself up for failing”.

2. Eigenzeit – Proper Time

time inherent to a process itself

Meaningful productivity is not about hurrying things but about letting them take the time that they should. We often feel busy because we try to do more in less time. But many processes take a set amount of time defined by their nature, and rushing them only frustrates you.

Farmers don’t feel idle when they are not working because they know that they cannot hurry the earth

However long the harvest takes is however long it is going to take. Just like you can’t rush a pregnancy, there are many things in life where we need to learn to let things run their course and stop rushing.

(Cue the math question about how long a pregnancy takes with one woman versus nine.)

3. Freedom through commitments; Joy of missing out

When you commit, you foreclose other possible futures, and who could say whether those would have been any better? But it is this act of commitment that makes the choice meaningful. Choosing the right things to commit to frees you up to do the things that truly matter to you.

The joy of missing out is the opposite of FOMO. If you don’t choose what to miss out on, then your choices can’t mean anything.

4. We don’t feel like doing things that are truly important to us

It sounds paradoxical, but precisely because important things matter to us:

- We are forced to face our limits

- We experience discomfort because we value the task at hand

- We use distractions to seek relief from confronting our limitations

The antidote is to submit to this unpleasantness: this is what it feels like for finite humans to commit to valuable tasks. (For example, I find writing this reflection somewhat unpleasant, even though it is valuable to me.)

5. Rest for the sake of resting ⭐

Increasingly, “we are the kind of people who don’t actually want to rest”, who find it seriously unpleasant to pause in our efforts to get things done, and get anxious when we don’t feel like we’re sufficiently productive.

The purpose of rest is not to recover so that you can work harder tomorrow. Rest for the sake of resting, and enjoy the moment.

(consider) the possibility that today, at least, there might be nothing more you need to do in order to justify your existence.

It is not wasteful to rest. Not every moment spent awake should be put towards personal growth.

This resonated deeply with me.

6. Work expands so as to fill time available for its completion

There is no reason to believe you’ll ever feel “on top of things”. The more you try to get done, the more there will be to do.

When housewives got access to washing machines and vacuum cleaners, they didn’t save any time on cleaning, because society’s standards of cleanliness simply rose to offset the benefits.

It’s not that you never get through your email; it’s that the process of “getting through your email” actually generates more email. This relates to “maximisers vs satisficers”. It’s nearly impossible to maximise anything to 100%, so you’ll always feel like you’ve fallen short. But if you learn to accept things at a satisfactory level, you’ll find life a lot more pleasant.

7. Learn to say “no”, the hard kind of no

We all know about saying “no”. It’s easy to say no to the things you don’t want to do, but it’s much harder to say “no” to the things that you actually want to do. For example, spending time writing right now means that I am forgoing time with my family, or any of the other things that I would like to do.

the core challenge of managing our limited time isn’t about how to get everything done—that’s never going to happen—but how to decide most wisely what not to do, and how to feel at peace about not doing it

8. Limit your work in progress

If you have too many different things to work on, you end up finishing none of them and feeling bad about it, and the cycle goes on.

Since you only have finite time, prioritise your tasks. Consciously choose to forgo the things that you want to do, to make space for the truly important ones.

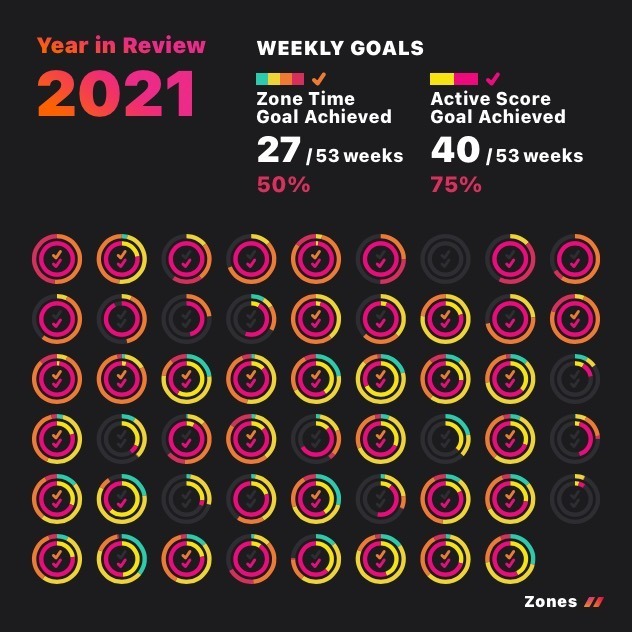

Personally, I’ve chosen to limit my active projects to just three. It’s the whole reason why I was able to write this entry at all.

9. Do the next and most necessary thing

This relates to the “fog of the future” idea: you can only choose the best possible option given what you know at the moment. So don’t be too hard on yourself when you realise in retrospect that you made the wrong choice; it was the best you could do given what you knew.

Honestly the “next and most necessary thing” is all that any of us can aspire to do in any moment. “And we must do it despite not having any objective way to be sure what the right course of action even is.”

10. Embrace your limitations

Embracing your limits means giving up hope that with the right techniques, and a bit more effort, you’d be able to meet other people’s limitless demands, realise your every ambition, excel in every role, or give every good cause or humanitarian crisis the attention it seems like it deserves. It means giving up hope of ever feeling totally in control, or certain that acutely painful experiences aren’t coming your way. And it means giving up, as far as possible, the master hope that lurks beneath all this, the hope that somehow this isn’t really it—that this is just a dress rehearsal, and that one day you’ll feel truly confident that you have what it takes.

The author summarised the book a lot better than I could ever hope to.

I had a great time reading this book because so many of its ideas and examples are highly relatable for me. I highly recommend it: it has genuinely influenced how I think, changed how I approach productivity, and helped me learn to embrace my limits as a human.